It’s getting weird out there. You see a video of a world leader declaring war, or a celebrity endorsing a scam crypto token. Your gut says it’s fake, but your eyes… your eyes are convinced. That’s the unsettling power of modern AI-generated misinformation and deepfakes. They’re no longer clunky, easy-to-spot oddities. They’re sophisticated, scalable, and frighteningly persuasive.

So, what do we do? Throw our hands up? Hardly. The game has changed, and so must our defenses. This isn’t about basic “fact-checking” anymore; it’s about building a multi-layered digital immune system. Let’s dive into the advanced strategies that individuals and organizations, and frankly all of us, need to adopt.

Shifting from Detection to Provenance: The New Frontier

For years, the focus was on detection: find the glitch in the Matrix, whether an unnatural blink, a weird shadow, or an audio artifact. But here’s the deal: AI is getting better at not making those mistakes. Detection is an arms race, and we can’t rely on it alone. The smarter, more sustainable strategy is to shift toward content provenance and authentication.

Think of it like a birth certificate for digital content. Where was it created? By whom? Has it been altered? Technologies like cryptographic hashing and signed metadata (think of the C2PA standard from the Coalition for Content Provenance and Authenticity) let creators attach a tamper-evident seal to their work: if the file or its provenance record is changed, the seal no longer verifies. Platforms can then display a simple icon showing that the content’s origin has been verified. It doesn’t tell you whether something is true, but it does tell you whether it’s authentic. That’s a huge first step.
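To make the “tamper-evident seal” idea concrete, here is a minimal sketch of the underlying mechanics, assuming a simplified setup: hash the content’s bytes, bind that hash to a creator claim, and sign the result. The function names and the key are hypothetical, and HMAC with a shared secret stands in for the certificate-based public-key signatures that C2PA actually specifies. This is not the C2PA manifest format, just the core idea.

```python
import hashlib
import hmac
import json

# Hypothetical signing key. Real C2PA manifests are signed with X.509
# certificates (public-key crypto); HMAC with a shared secret is only a
# stand-in so this sketch runs on the standard library alone.
SIGNING_KEY = b"demo-key-not-for-production"


def make_manifest(content: bytes, creator: str) -> dict:
    """Bind a creator claim to the exact bytes of the content, then sign it."""
    claim = {
        "creator": creator,
        "sha256": hashlib.sha256(content).hexdigest(),
    }
    payload = json.dumps(claim, sort_keys=True).encode()
    signature = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify(content: bytes, manifest: dict) -> bool:
    """True only if the claim is unedited AND it matches these exact bytes."""
    payload = json.dumps(manifest["claim"], sort_keys=True).encode()
    expected = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False  # the claim was edited (or signed with a different key)
    return manifest["claim"]["sha256"] == hashlib.sha256(content).hexdigest()


original = b"...raw bytes of a photo..."
manifest = make_manifest(original, creator="Newsroom Photo Desk")
print(verify(original, manifest))            # True
print(verify(original + b"edit", manifest))  # False
```

Note what a passing check does and doesn’t prove: the bytes are unchanged since signing and the claim wasn’t edited, not that the scene depicted actually happened.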

Strategy 1: Adopt a “Lateral Reading” Habit

Okay, this one’s for you, the person scrolling through feeds. Professional fact-checkers use a technique called lateral reading. Instead of deeply scrutinizing the content itself (which is exactly what disinformation wants you to do), you immediately open new browser tabs to check the source and the claims elsewhere.

See a shocking video? Don’t just watch it ten times looking for flaws. Immediately jump to a trusted news site or search for the event with keywords like “debunked” or “hoax.” You’re not judging the pond by staring into its murky depths; you’re getting a satellite view of the entire landscape. It’s the single most effective personal defense you can build, honestly.

Strategy 2: Implement Digital Watermarking & AI Fingerprinting

For creators and publishers, this is non-negotiable. Invisible digital watermarks can be embedded in images, video, and audio. A more advanced approach, often called AI fingerprinting, happens at the source: major AI companies are starting to embed subtle, imperceptible signals in their models’ outputs, a kind of digital DNA. Specialized scanners can then identify content generated by specific AI tools.

It’s not a silver bullet, since bad actors can try to strip or degrade these signals, but it raises the cost and complexity of spreading undetected fakes. Wholesale, automated generation of misinformation that passes as authentic becomes much, much harder.
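Here is a deliberately simple illustration of an invisible watermark, assuming an image represented as a flat list of 8-bit grayscale pixel values: hide a short message in each pixel’s least significant bit. The helper names and the `AI-01` tag are hypothetical, and this least-significant-bit scheme is a classic textbook technique, not what any AI company ships. Production watermarks are designed to survive compression and editing far better.

```python
def embed_watermark(pixels: list[int], message: bytes) -> list[int]:
    """Hide `message` in the least significant bit of each pixel (toy scheme)."""
    # Unpack the message into bits, most significant bit first.
    bits = [(byte >> shift) & 1 for byte in message for shift in range(7, -1, -1)]
    if len(bits) > len(pixels):
        raise ValueError("image too small for this message")
    marked = list(pixels)
    for idx, bit in enumerate(bits):
        # Clear the lowest bit, then set it to the message bit: each pixel
        # changes by at most 1, which is visually imperceptible.
        marked[idx] = (marked[idx] & ~1) | bit
    return marked


def extract_watermark(pixels: list[int], length: int) -> bytes:
    """Read `length` bytes back out of the pixels' least significant bits."""
    out = bytearray()
    for b in range(length):
        byte = 0
        for i in range(8):
            byte = (byte << 1) | (pixels[b * 8 + i] & 1)
        out.append(byte)
    return bytes(out)


pixels = [100 + (i % 50) for i in range(64)]  # stand-in for real image data
marked = embed_watermark(pixels, b"AI-01")
print(extract_watermark(marked, 5))  # b'AI-01'

# A bad actor who knows the scheme can wipe it in one pass.
wiped = [p & ~1 for p in marked]
print(extract_watermark(wiped, 5))  # b'\x00\x00\x00\x00\x00'
```

The last lines show exactly the weakness described above: anyone who knows this scheme can erase the mark with a single pass over the pixels, which is why robust watermarking remains an active engineering problem rather than a solved one.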

The Human-in-the-Loop: Why Critical Thinking is Still the Killer App

All this tech is great. But the most advanced strategy is, and always will be, nurturing a mindset that is skeptical but not cynical. We have to train ourselves, and our communities, to pause before amplifying.

Ask these questions, every single time:

- Who benefits from me believing this? Follow the emotional or financial incentive.

- Does this make me feel an overpowering emotion immediately? Rage, fear, tribal pride? That’s often the point.

- Is this information available on multiple, credible platforms? Or is it siloed in one echo chamber?

It’s about emotional hygiene. AI-generated content is engineered for virality, which means it’s engineered to bypass our logical minds. Recognizing that gut-punch feeling as a potential red flag is a superpower.

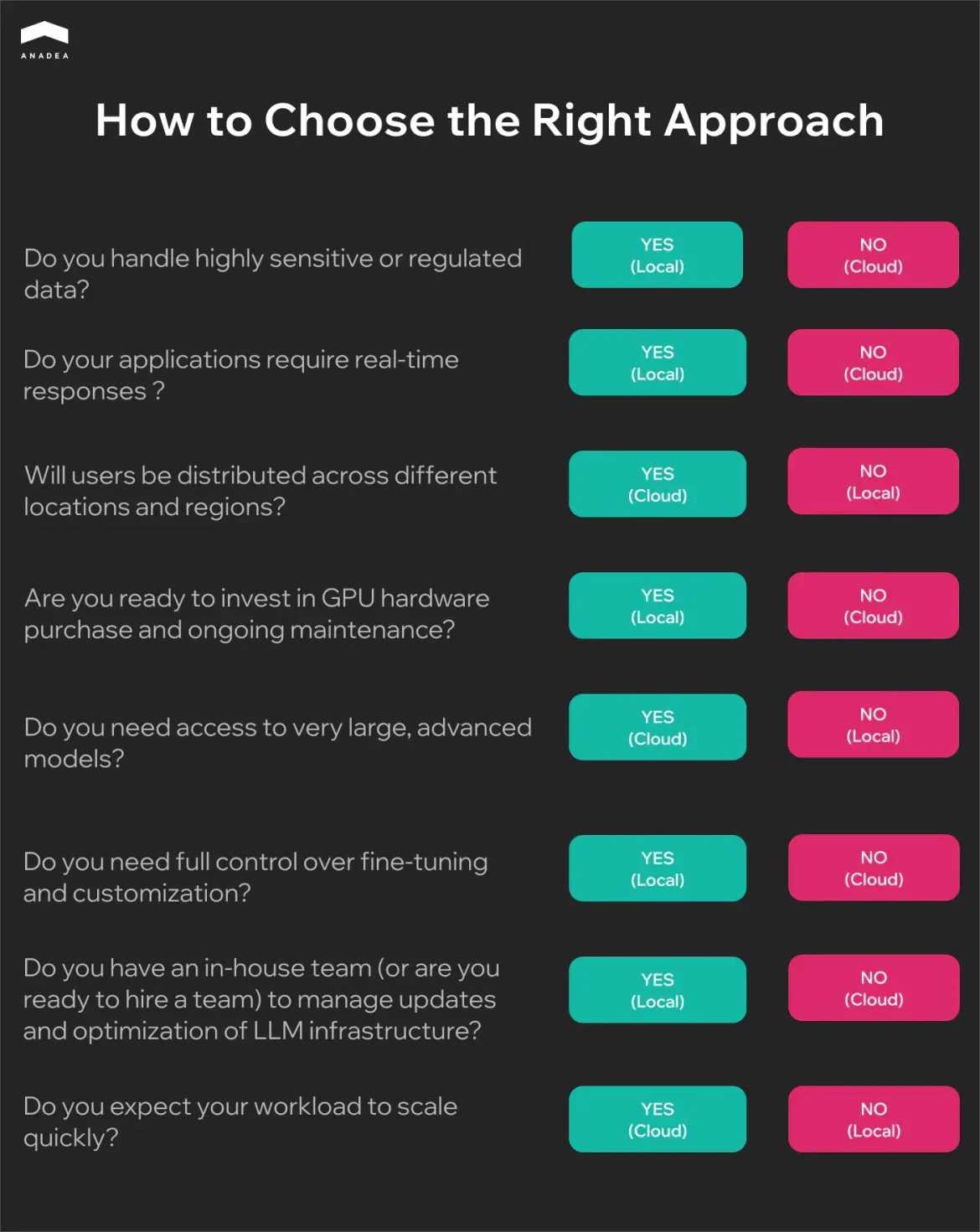

Strategy 3: Leverage Decentralized Verification Networks

This is where it gets futuristic. Imagine a network, not owned by any single government or corporation, where verified pieces of evidence (original photos, videos, documents) are logged on an immutable ledger (like a blockchain). Timestamps, geolocation, and device IDs are cryptographically sealed.

When a piece of content goes viral, it can be checked against this ledger. Does it match a verified original? Or is it a clone with malicious edits? This decentralized approach to verification builds trust through transparency and collaboration, rather than top-down authority. It’s early days, but it’s a promising path toward combating deepfakes in real time.
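The core data structure behind such a ledger can be sketched as a hash chain: each entry records a content hash plus capture details and the hash of the previous entry, so editing any past record breaks every link after it. This is a single-process sketch with hypothetical names (`Ledger`, `cam-123`); it leaves out the distributed consensus, replication, and device attestation that a real decentralized network would need.

```python
from __future__ import annotations

import hashlib
import json
import time
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _hash_record(record: dict) -> str:
    """Deterministic SHA-256 over a record's fields."""
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode()).hexdigest()


@dataclass
class Ledger:
    entries: list[dict] = field(default_factory=list)

    def append(self, content: bytes, device_id: str, location: str) -> dict:
        """Log a verified original, chained to the previous entry."""
        record = {
            "content_sha256": hashlib.sha256(content).hexdigest(),
            "device_id": device_id,
            "location": location,
            "timestamp": time.time(),
            "prev_hash": self.entries[-1]["entry_hash"] if self.entries else GENESIS,
        }
        record["entry_hash"] = _hash_record(record)
        self.entries.append(record)
        return record

    def chain_is_intact(self) -> bool:
        """Recompute every link; any edited entry breaks the chain."""
        prev = GENESIS
        for rec in self.entries:
            body = {k: v for k, v in rec.items() if k != "entry_hash"}
            if rec["prev_hash"] != prev or _hash_record(body) != rec["entry_hash"]:
                return False
            prev = rec["entry_hash"]
        return True

    def find_original(self, content: bytes) -> dict | None:
        """Return the logged entry matching these exact bytes, if any."""
        digest = hashlib.sha256(content).hexdigest()
        return next((r for r in self.entries if r["content_sha256"] == digest), None)


ledger = Ledger()
photo = b"original photo bytes"
ledger.append(photo, device_id="cam-123", location="51.5N,0.1W")
print(ledger.find_original(photo) is not None)  # True
print(ledger.find_original(photo + b"edit"))    # None
ledger.entries[0]["location"] = "somewhere else"
print(ledger.chain_is_intact())                 # False
```

Because `find_original` matches on an exact SHA-256 digest, even a harmless re-encode of the file will not match; near-duplicate matching usually relies on perceptual hashing instead.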

A Practical Toolbox: What You Can Do Today

Let’s get concrete. Here’s a mix of tools and tactics to add to your arsenal.

| Tool Type | Examples / Actions | Best For |
|---|---|---|
| Reverse Image Search | Google Lens, TinEye | Finding the original context of photos and video stills. |
| Audio Analysis | Checking for unnatural pauses and whether the background noise stays consistent. | Spotting cloned voices or AI-generated speech. |
| Metadata Checkers | Online EXIF data viewers | Seeing a file’s creation date, location, and device info (when present; many platforms strip it, and it is easy to edit). |
| Browser Plugins | Extensions that flag known fake news sites or altered media. | Real-time, low-friction warnings as you browse. |
| Platform Reporting | Using “False Information” reporting tools on social media. | Flagging content to platform moderators (imperfect, but necessary). |
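Reverse image search engines don’t publish their internals, but one common building block for matching near-duplicate images is perceptual hashing. Below is a sketch of the textbook “average hash”, assuming the image has already been shrunk to an 8x8 grid of grayscale values (a real pipeline would resize and convert it first, for example with an imaging library). The function names and test images are hypothetical. Visually similar images produce hashes a small Hamming distance apart; a meaningful edit pushes them further apart.

```python
def average_hash(gray: list[list[int]]) -> int:
    """64-bit perceptual hash: one bit per cell, set if brighter than the mean.

    Assumes `gray` is already an 8x8 grid of grayscale values (0-255).
    """
    flat = [v for row in gray for v in row]
    if len(flat) != 64:
        raise ValueError("expected an 8x8 grid")
    mean = sum(flat) / len(flat)
    bits = 0
    for v in flat:
        bits = (bits << 1) | (1 if v > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")


# A smooth gradient standing in for a downscaled photo.
original = [[(r * 8 + c) * 4 for c in range(8)] for r in range(8)]

# Slight pixel noise, like a recompressed repost.
noisy = [[v + (1 if (r + c) % 2 == 0 else -1) for c, v in enumerate(row)]
         for r, row in enumerate(original)]

# A bright patch pasted into one corner, like a manipulated edit.
edited = [row[:] for row in original]
for r in range(4):
    for c in range(4):
        edited[r][c] = 255

h = average_hash(original)
print(hamming(h, average_hash(noisy)))   # 0: treated as the same image
print(hamming(h, average_hash(edited)))  # far larger: the patch flips many bits
```

Unlike exact cryptographic-hash matching, this tolerates small pixel-level noise, which is what makes it useful for tracing a reposted or recompressed still back to its source.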

Honestly, the most important tool isn’t listed: your network. Have a group chat where you can say, “Hey, saw this. Seems off; can anyone verify?” Collective intelligence can be remarkably resilient to AI-generated misinformation.

The Long Game: Building Societal Resilience

In the end, this isn’t just a technical problem. It’s a social one. Advanced strategies must include media literacy education integrated into every level of schooling—not as a one-off lesson, but as a core skill, like math or reading. We need to normalize questioning sources without accusing intent. And we have to support quality journalism, the very ecosystem that provides the verified facts we need to anchor ourselves.

The goal can’t be a perfect, fake-free internet. That’s impossible. The goal is resilience: an information environment where AI-generated falsehoods struggle to take root, spread more slowly, and cause less harm when they do. It means building a world where our trust is earned through verifiable provenance and consistent integrity, not just slick production value.

That future is less about winning a war against bots, and more about strengthening the human connections and critical faculties that make us… well, human. And that’s a fight worth engaging in, with every share, every pause, and every skeptical question we ask.